A New XR Project

In April 2020, we started working on a new AR project for a large entertainment center in Las Vegas. The project was a treasure hunt-like experience to be deployed as an interactive installation.

During the initial discussions, iPhone 11 Pro Max was selected as the target platform. The devices would be encased in custom-designed handheld masks to amplify the feeling of immersion into the whole theme of the venue.

We chose that specific iPhone model for the horsepower necessary to handle the demanding particle-based graphics. The phone also offered a reasonably sized AR viewport while keeping the weight of the entire setup relatively low.

Considering the project’s scale, its high dependency on the graphical assets, and our pleasant experiences with developing the Magic Leap One app for HyperloopTT XR Pitch Deck, we selected Unity as our main development tool.

The Heart and Soul of the Experience

In the AR experience, the users would rent the phone-outfitted masks and look for special symbols all around the dim but colorfully lit, otherworldly venue. The app would recognize the custom-designed symbols covered with fluorescent paint and display mini-games over them for the users to enjoy.

We already had several challenges to solve.

The main one was detecting the symbols — there was nothing standard about them in terms of AR-tracking. Also, the on-site lighting was expected to be atmospheric, meaning dark — totally AR-unfriendly.

The games themselves were another challenge. Users could approach and view the AR games from any distance and at any angle, and therefore over any background. The graphics would consist mostly of very resource-intensive particle effects, as particles were the theme of the app.

Plus, we only had a couple of months to develop the AR experience.

There was also one big question left: “How are the users going to interact with the app?”

Enter Hand Gestures

In the initial brainstorming, we considered the most obvious option, the touch screen interface, as well as various third-party controllers and other methods, which we describe in our analysis of selected mobile augmented reality app interaction methods.

Another option that came up was hand tracking. We recalled how even the simple gesture support in Touching the Music made the experience feel outright magical for the users. That was a very tempting reason to include it.

But then we realized a few important differences between the two contexts — playful vs. purposeful interactions.

Playful vs. Purposeful Interactions

In Touching the Music, you could only interact with the content in a very limited way. You could touch or pinch animated objects hovering in the air to get a nice reaction. There were no consequences for performing a gesture incorrectly (which was not that hard, by the way). In case of a mistake, the objects still did something on their own, so the users didn’t feel punished for not getting the Kung Fu-like move perfectly.

The new project included games that required precision — if a gesture wasn’t recognized correctly often enough, the user could lose. Being gamers ourselves, we understood how unforgiving interfaces could frustrate and downright spoil the game. We didn’t want our users to experience either.

Headsets vs. Handhelds

Another important difference was the hardware platform. Touching the Music was targeting Magic Leap One, a headset. The new project was supposed to run on an iPhone embedded in a handheld mask.

In the first scenario, the users had both hands free, which made the interaction more natural. In the second, that was no longer the case. The awkwardness of making gestures with one hand in front of a device held in the other turned out to be another issue, though still not the biggest one.

In Search of the Unicorn

After reviewing the available methods, the client deemed the touch screen interface (the only 100% reliable way of controlling the application) unacceptable. The client wanted to build something special and memorable.

When the hand gesture-based input methods turned out to be extremely difficult to achieve, we set out on a journey to find controllers that would make the AR experience engaging and entertaining. What gave the journey an additional twist was the tight deadline: we had only a few weeks to spend on the input method, as there were many other features to deliver.

ARKit 3 Body Tracking

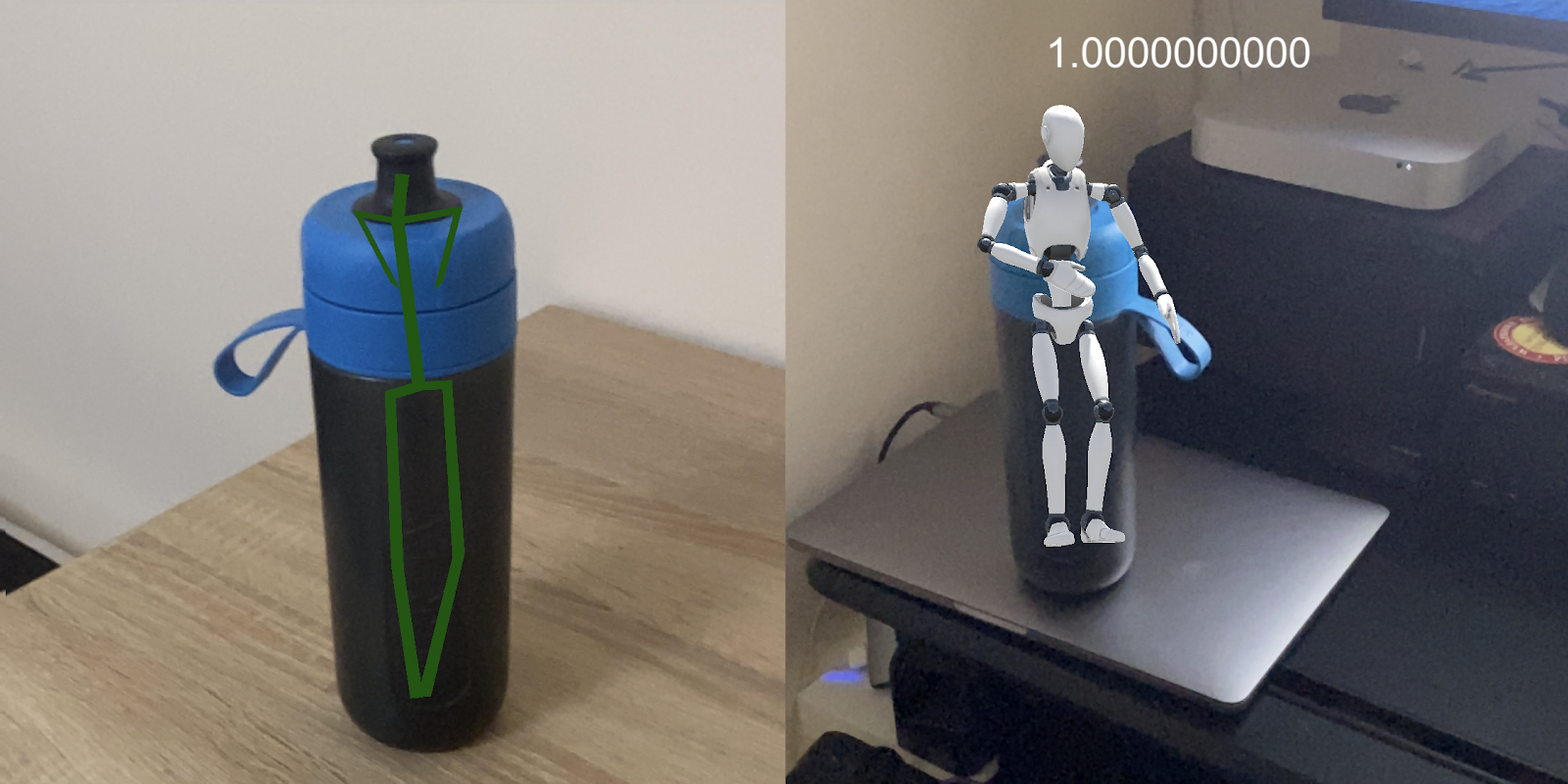

The very first thing we tried was iOS’s very own ARKit 3, which had this cool new feature called body tracking.

ARKit 3 body tracking was a promising solution.

So we built a sample app that would display a skeleton or a mannequin over a person.

We were just about to start testing when...

Wait, what just happened?

We encountered several false positives similar to the ones in the picture, e.g., shadows on the walls, paintings, various decorations being mistakenly identified as humans. That was not good, but we weren't ready to throw in the towel just yet.

Our plan was to track the hand of the person holding the iPhone. Since the hand would be at a rather specific distance from the camera, it would also be in a certain size range. We could potentially use that information to filter out the possible false positives. Not trivial, but possibly solvable.
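The size-based filtering idea can be sketched roughly as follows. This is a minimal illustration in Python, not code from the project: the `Detection` type, the normalized bounding-box representation, and the area thresholds are all assumptions made up for the example, and real limits would have to be measured with the actual mask and camera.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical detection: bounding box in normalized [0, 1] image coordinates."""
    x: float
    y: float
    width: float
    height: float


def plausible_hand_detections(detections, min_area=0.05, max_area=0.40):
    """Keep only detections whose box covers a plausible share of the frame.

    A hand held at a roughly fixed distance from the camera should fall
    within a fairly narrow apparent-size range. The default thresholds
    here are illustrative guesses, not measured values.
    """
    return [
        d for d in detections
        if min_area <= d.width * d.height <= max_area
    ]


candidates = [
    Detection(0.40, 0.40, 0.30, 0.40),  # about 12% of the frame: plausible hand
    Detection(0.10, 0.10, 0.05, 0.05),  # tiny: e.g. a distant decoration
    Detection(0.00, 0.00, 1.00, 0.90),  # huge: e.g. a shadow across the wall
]
kept = plausible_hand_detections(candidates)
print(len(kept))
```

Only the first candidate survives the filter. In practice, a check like this would be one of several cues rather than a complete answer to false positives.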

Unfortunately, we quickly realized that the feature was called “body tracking” for a reason. Even though it could detect a skeleton based on a full image of a person (and other objects), it wouldn’t detect just the arm without the context of the body.

Body tracking looked great and was easy to set up, but it wouldn’t work for hand tracking. We had to look for alternatives.

Google MediaPipe

Our second lead was Google MediaPipe, which seemed to offer very impressive capabilities and covered our case specifically. There were still some challenges with false positives like detecting other people’s hands, but we could try to address those in a similar way to the one we planned for body tracking.

During our early attempts, we couldn’t get the project with MediaPipe to build for iOS 13. Later, near the end of the project, we tried again and were successful. The accuracy of the solution was very impressive. But we still didn’t use it, because the quality of tracking degraded significantly in the low-light conditions close to the ones that we expected in the production environment.

Microsoft Mixed Reality Toolkit

We continued our research by testing solutions from major tech companies. And so we found another SDK in that category, namely Microsoft’s Mixed Reality Toolkit.

Microsoft’s SDK was an interesting option as it supported Unity out of the box. But it turned out that the SDK was an abstraction over the capabilities of the different platforms. Since hand tracking was not available natively on iOS, MRTK couldn’t expose that functionality there.

We had to look elsewhere.

Non-Standard Problems & Creative Solutions

We now knew our requirements were quite specific and very challenging due to dim colored lighting at the venue. The tested solutions were dependent on computer vision algorithms — lighting had a big impact on the accuracy and quality of tracking.

Thinking about what else was there, we had to narrow down what it was that we really needed to make the interactions with the experience smooth and fun.

During subsequent discussions, we figured out that hand position was the crucial piece of information we needed. Once we had that, we could try to figure out a way to determine users’ intention, e.g., did they mean to touch something or were they just moving the hand to another position, based on other cues.

Since we only needed the position, we figured we could track a gadget like a ring or a bracelet, which would be more distinct and easier to track than a hand.

With that in mind, we could select fluorescent or glowing gadgets to address the low-light part. So we ordered a truckload of various Halloween props (they were actually perfect for the theme!) from rings to bracelets, sticks, and even gloves.

Equipped with that paraphernalia, we tried using ARKit object tracking, but we quickly realized that it wasn’t going to work.

Based on an article we found, we still considered using computer vision for color blob tracking, but that meant exactly what we wanted to avoid — reinventing the wheel.

Just recognizing a blob of color probably wasn’t a very difficult task, but getting something to work and getting it production-ready, especially considering the difficult conditions, are two different things.
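To show why the basic version looks deceptively simple, here is a minimal sketch of color blob tracking in plain Python. It is not what we built: the frame format (rows of RGB tuples), the `find_color_blob` name, and the tolerance values are assumptions for illustration only. A real implementation would use an image-processing library and would have to cope with colored lighting, motion blur, and reflections, which is exactly the hard part.

```python
def find_color_blob(frame, target, tolerance=40, min_pixels=4):
    """Return the (row, col) centroid of pixels close to `target`, or None.

    frame:     list of rows, each row a list of (r, g, b) tuples
    target:    the (r, g, b) color of the gadget we want to follow
    tolerance: maximum per-channel difference to count as a match
    """
    row_sum = col_sum = count = 0
    for r, row in enumerate(frame):
        for c, pixel in enumerate(row):
            if all(abs(p - t) <= tolerance for p, t in zip(pixel, target)):
                row_sum += r
                col_sum += c
                count += 1
    if count < min_pixels:
        return None  # too few matching pixels: treat it as noise
    return (row_sum / count, col_sum / count)


# A tiny 6x6 dark frame with a 2x2 glowing green patch.
BLACK, GREEN = (0, 0, 0), (30, 240, 60)
frame = [[BLACK for _ in range(6)] for _ in range(6)]
for r in (2, 3):
    for c in (3, 4):
        frame[r][c] = GREEN

print(find_color_blob(frame, target=(20, 255, 50)))
```

The sketch finds the patch centered at row 2.5, column 3.5. Everything beyond this toy case (choosing a robust color space, rejecting similarly colored venue lights, smoothing the position over time) is where the production effort would go.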

We didn’t have that much time left.

Once More Unto the Breach!

We found ourselves closer to the deadline with a better understanding of the challenge ahead of us, but unfortunately no closer to a viable solution.

Since our app required some form of interaction either way, we continued working on the touch screen interface and checking the available third-party controllers in parallel with our hand-tracking research.

At that point, hand tracking was still the expected outcome. Since none of the free solutions worked for our use case and implementing a custom one was too risky, we decided to give a paid solution a try.

The product was called ManoMotion. It offered hand tracking with gestures that seemed on par with Google MediaPipe. ManoMotion had a Unity SDK and public samples available that we could evaluate.

The integration was easy. At first sight, it looked like it could work. But then we remembered the degraded tracking in low-light conditions from the previous attempts.

We contacted ManoMotion and described our case. They replied that they had worked on a similar case before and that they could adjust the algorithm to the specific conditions at the venue.

We decided to build some prototypes. The accuracy of the basic SDK (not the Pro version, no custom modifications) was around 70% in well-lit conditions, which wasn’t perfect, but promising. ManoMotion later sent a demo based on footage in on-site conditions. The demo offered similar results in the production conditions, which was really impressive.

But we wanted to figure out how to improve the base accuracy, as we were still comparing the results to our Magic Leap One experience. Hand tracking on the headset wasn’t perfect either: the hand needed to remain within the camera's field of view, which required some getting used to. Still, it seemed to work better.

The reason was the distance of the hand to the camera. With the handheld mask, the distance was shorter so the effective tracking area was smaller.
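A quick back-of-the-envelope calculation illustrates the effect. For a camera with a horizontal field of view θ, the width visible at distance d is 2·d·tan(θ/2). The sketch below uses made-up numbers (a 60° field of view and hand distances of 0.25 m and 0.5 m); they are assumptions for illustration, not measurements from either device.

```python
import math


def visible_width(distance_m, horizontal_fov_deg):
    """Width (in meters) of the area the camera sees at a given distance."""
    return 2 * distance_m * math.tan(math.radians(horizontal_fov_deg) / 2)


ASSUMED_FOV_DEG = 60  # illustrative value, not a device specification

for distance in (0.25, 0.5):
    width = visible_width(distance, ASSUMED_FOV_DEG)
    print(f"hand at {distance:.2f} m -> about {width:.2f} m of trackable width")
```

Whatever the actual numbers are, the trackable width scales linearly with distance, so halving the hand-to-camera distance halves the width and cuts the trackable area to roughly a quarter.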

This meant that creating an intuitive hand-tracking experience for mobile required much more effort, as the users needed to be made aware of the limitations without breaking the immersion.

Hand tracking was still that magical element that everyone involved wanted to include in the experience. But it became clear that we wouldn't be able to make the app controllable in that way in the amount of time we had.

The user experience just wouldn’t be good enough based on the project's quality standards.

Summary and Conclusions

Regrettably, the first release of our app didn’t include hand tracking. We ultimately used the reliable, but not as cool, touch screen interface.

But we see potential to include hand tracking in future updates. If we decide to revisit that idea, we will most likely pick up where we left off with ManoMotion, as it seemed to be the most promising solution for our use case.

For other projects where the expected lighting conditions are AR-friendly (I’m not sure we can actually make that assumption in the world of AR, unless we consider location-based experiences specifically), Google MediaPipe might be a great choice as well.

Based on my experience with XR, I strongly believe that hand gestures are the future of interactions (even if it means wearing gloves or similar gadgets). You can actually see on people’s faces how well they respond to them when offered such an option.
